AI: Reversion to the old days - Software as a product (SaaP) [POST-2]

How the AI revolution will force the creation of a new breed of software companies selling products, not services

In the first post we explored why SaaS exists and why many venture capitalists consider it the king of business. Link to the article: POST-1

Now as part of this series we shall ask how and why AI businesses are different and what challenges are present in running them today.

The king is dead, long live the king

The main argument of the original a16z article [LINK] revolves around the conclusion that AI companies look more like service providers than ‘classic’ software companies (aka SaaS), which are the gold standard for VCs. This is drawn from their observations of the following properties of AI companies:

Lower gross margins due to heavy cloud infrastructure usage and ongoing human support;

Scaling challenges due to the thorny problem of edge cases;

Weaker defensive moats due to the commoditization of AI models and challenges with data network effects.

Effectively, a number of companies have attempted to sell AI as SaaS, and to date that has not been successful on average. Let’s dive a little below the surface and see if these 3 claims are actually true.

Lower gross margins - infrastructure sucks (um yes and no)

The authors claim that margins are 25% lower, and attribute this to training and running AI models in the cloud. Overall this is true, sort of.

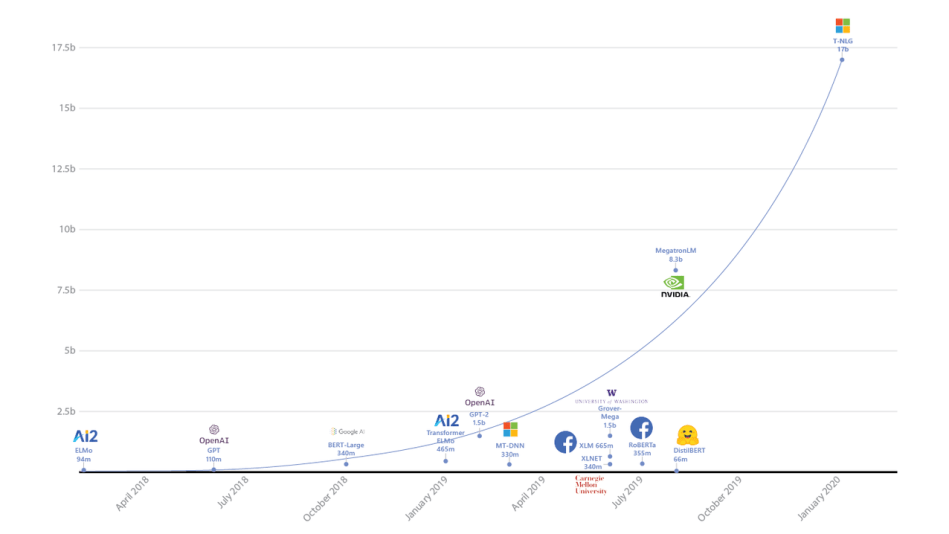

Models are also growing very quickly in parameter count, which effectively requires more and better cards (more video memory). Check out the graph below:

As you can see, the parameter counts of common NLP models follow an exponential curve. GPT-3 is not even on this chart - it’s at 175B parameters 😒. What this graph tells any researcher in the field is that the first thing to try is the lazy one, aka throw more compute at a problem and see where the ceiling is (assuming you have $1 billion to burn on machines). Maybe AGI is just a few 100B away 😩.

The irony of this will be discussed in a more technical post, but let’s just say those big models are actually not very efficient, aka you don’t need all those parameters at all - we just don’t know how to train them any better today. So people are paying tons of money to build the biggest model, when a large portion of what is trained is actually worthless. But let’s not dive down that rabbit hole.

So what does this mean for compute costs? Well, if you take this at face value and decide to train your own GPT-3 in the cloud, you will spend a bunch of money. So the general idea that the infrastructure needed to train state-of-the-art ML is expensive is true.

But if you are planning on spending $4.6M to train a model like GPT-3 in the cloud (this is for the largest GPT-3), you should probably consider buying 30 DGX-1s (at $150K each 😭) for $4.5M, giving you about 30 petaFLOPS to play with. GPT-3 is not even on the chart above; it takes about 3.14E23 FLOP to train, and 1 petaFLOPS is 1E15 FLOP per second 😒 [LINK]. So it would take our ‘little’ cluster ~121 days to train this model (assuming the estimates on training are not inflated…).
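The back-of-the-envelope numbers above can be checked in a few lines of Python. This is a rough sketch, not a benchmark: it uses the figures quoted in this post (3.14E23 FLOP, 30 machines, $150K each) and assumes every DGX-1 sustains a full 1 petaFLOPS with perfect utilization, which a real training run will not reach. The function and constant names are made up for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def training_days(total_flop: float, machines: int, flops_per_machine: float) -> float:
    """Days needed to perform total_flop operations on an ideal cluster."""
    cluster_flops = machines * flops_per_machine  # aggregate FLOP per second
    return total_flop / cluster_flops / SECONDS_PER_DAY


# Figures quoted in the post
GPT3_TRAINING_FLOP = 3.14e23
MACHINES = 30
PRICE_PER_MACHINE_USD = 150_000

# Assumption: each machine delivers 1 petaFLOPS (1e15 FLOP per second), fully utilized
PETAFLOPS = 1e15

days = training_days(GPT3_TRAINING_FLOP, MACHINES, PETAFLOPS)
hardware_cost = MACHINES * PRICE_PER_MACHINE_USD

print(f"Cluster cost: ${hardware_cost:,}")
print(f"Ideal training time: {days:.0f} days")
```

Any utilization below 100% stretches the training time proportionally, so treat the result as a lower bound.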

It is clear that any sensible person would not train a large model on a 3rd party cloud because instead of paying $4.6M to train 1 model one time, you can train as many as you want for $4.5M (not to mention, I am sure Nvidia will offer you a bulk discount).
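To make the "train as many as you want" point concrete, here is a small sketch of how the hardware price amortizes over repeated training runs. It deliberately ignores power, cooling, staff, and depreciation (a big assumption), and it reuses the ~121 days per run estimated earlier. All names are hypothetical.

```python
# Figures quoted in the post
CLOUD_COST_PER_RUN_USD = 4_600_000
CLUSTER_PRICE_USD = 4_500_000
DAYS_PER_RUN = 121


def owned_cost_per_run(runs: int) -> float:
    """Hardware price spread over the number of runs (operating costs ignored)."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    return CLUSTER_PRICE_USD / runs


# Assumption: runs happen back to back on the same cluster
runs_per_year = 365 // DAYS_PER_RUN

for runs in range(1, runs_per_year + 1):
    cloud_total = CLOUD_COST_PER_RUN_USD * runs
    print(
        f"{runs} run(s): cloud ${cloud_total:,} total, "
        f"owned ${owned_cost_per_run(runs):,.0f} per run"
    )
```

Under these simplified assumptions the cluster pays for itself on the first full-size run, and every run after that only lowers the cost per model.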

This is why OpenAI (owner of GPT-3) partnered with Microsoft: so they could sidestep building and maintaining clusters and still have the amount of compute they need at their disposal.

As an aside, OpenAI is charging 55x-60x the compute cost to use their model, and it is being sold per number of processing runs (aka tokens, if you’re familiar with NLP models). Not sure who is buying it, but if you’re interested you can get it here: [LINK]

So back to margins. Yes, if you are a VC-funded company that needs to quickly scale and grow (as investors would like), you will need to burn significantly more cash on AWS. If you are running a business where you can allocate capital intelligently or partner, and can afford the time to build a defensible moat and invest in compute hardware, your margins will be commensurate with your investment in infrastructure. In other words, AI companies are starting to resemble software companies that care about resource management in developing their products. Operating costs and capital investments need to be factored into any decision to build or grow; it is not growth at all costs. These are not social media companies running servers to serve a website. Inherently, what this can mean is that AI companies are hard to fit into the VC model.

The final point that is made is the reliance on humans in the loop for high degrees of accuracy. Again, this is a narrow view of the larger problem. Businesses should be built around what the technology can deliver given the constraints of cost, time, effort, etc. One can build a very nice car for $500K, but very few people can buy it. Or one can build a car for $20K and have a much larger target market. Similarly, you can invest human labor into your models, making them more expensive, or you can make less precise models and serve a different client…

Scaling Challenges — Yes it is hard to scale AI

Put another way, users can – and will – enter just about anything into an AI app.

Yes, this is very true for users of AI apps, as well as users of pretty much any form of technology. Good technology is built to be idiot-proof.

The argument that scaling is challenged by edge cases is oversimplified. Edge cases challenge all software. The real problem is that AI is a statistical system, so its edge cases live on a distribution and are not discrete. Ergo, it is very hard to write good one-off heuristics for them without negatively affecting the rest of the system. In older software such as databases, caches, and search, rule sets can be introduced to handle specific occurrences that would lead to breakage. These edge cases are usually easily predictable.

For example, look at Google Search: it accepts any input and handles it gracefully. If I put in [asidhoadshjaisdhiuasdhaisoduhaisd2], Google responds with:

With ML, by contrast, if you feed noise into a learned model, it is hard to heuristically predict the outputs across the high-dimensional manifold. For example, you may not have tested a picture of animals, which may cause the model to output the label ‘people’. This happened to Google Image Search [LINK]. Google’s heuristic solution was/is to remove the term altogether from all of Image Search. Yet for the majority of classes Google Image Search works reasonably well. In other words, Google was able to handle an edge case just fine (even though it wasn’t graceful).
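A heuristic like the term removal described above can be sketched as a post-processing filter on a classifier’s output. This is an illustration only and not Google’s actual implementation: the function name `filter_predictions`, the blocklist, the confidence threshold, and the example scores are all hypothetical.

```python
from typing import Dict, Optional, Set


def filter_predictions(
    scores: Dict[str, float],
    blocked_labels: Set[str],
    min_confidence: float = 0.5,
) -> Optional[str]:
    """Return the best allowed label, or None if nothing is confident enough."""
    # Heuristic 1: drop blocked labels entirely, for every input
    allowed = {label: s for label, s in scores.items() if label not in blocked_labels}
    if not allowed:
        return None
    best_label = max(allowed, key=allowed.get)
    # Heuristic 2: refuse to answer when the model is unsure
    if allowed[best_label] < min_confidence:
        return None
    return best_label


# Hypothetical classifier scores for a single image
scores = {"cat": 0.62, "dog": 0.30, "tree": 0.08}

print(filter_predictions(scores, blocked_labels=set()))
print(filter_predictions(scores, blocked_labels={"cat"}))
```

The sketch also shows why this kind of fix is not graceful: the blocked label disappears for every input, including the ones where the model would have been right, and the system falls back to returning nothing.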

Scaling issues arise with ML and AI if the wrong models or systems are built for the job. The example provided in the write-up, a video model built to detect manufacturing defects that is sensitive to camera placement, simply implies you’re doing it wrong, unless in this case the cameras never move. If your models are going to be exposed to variable environments, train models that are better at generalizing to those environments, or develop approaches to constrain the problem in the environment. We don’t use screwdrivers to hammer in nails, and you don’t need to use YOLO [LINK] for every object detection/video problem.

Conclusions

At the end, the article leads to a few recommendations for founders building AI businesses:

Plan for high variable costs.

Embrace services

Plan for change in the tech stack

Build defensibility the old-fashioned way

The most important one IMO is the last one they provide. If you haven’t read the article, what they mean by building defensibility the ‘old-fashioned way’ is:

good products and proprietary data almost always builds good businesses

Their point goes on to say that AI businesses seem more like service businesses and less like VC-style SaaS businesses with recurring revenue, the difference being that they deliver their products in non-SaaS-like ways.

Most B2B contracts, especially on the larger side, carry both recurring and service-based costs. This is true for any company I am familiar with, especially when you cross into 6-figure deal sizes. This is not commonly stated in SaaS because many SaaS companies serve down market, where their deals are much smaller. Effectively, the split between recurring and service revenue is strongly correlated with deal size.

Furthermore, I find it ironic that the idea that good products = good business is the end recommendation, as if it were some sort of novel twist for business. It is as if the growth hacking mindset has blinded the authors to the goal of technology products: to provide value to users and, in turn, have them pay for that value. If what defines your product’s value is the utility it brings to users, and it does not create network effects or compounding mechanisms, this does not make it a bad product. But it may make it a less attractive business for an investor who has a 3-year time horizon for a follow-on fundraise to offset risk and book paper returns.

I understand that this ‘old-fashioned’ business may not fit perfectly within the confines of VC backed ideals, but what happened to building technology to solve hard problems for customers and having them pay you for that? Perhaps it’s time for venture capital to also revert to expecting startups to focus on long term value creation for users and by that proxy providing returns to investors.

I remain hopeful that a set of new and exciting companies are taking on the challenges presented by AI, as well as many other technical areas. I’m inspired by these companies trying to break new ground. And hopefully in doing so they will forge a path for others to follow.